Big Data is taking the world by storm. It now plays a vital role in an organization's success, from shaping the company's plan of action to driving the growth of individual projects.

Big Data is, basically, the collection and analysis of information from various sources. The data can be either structured or unstructured. Structured data includes tables in SQL databases, whereas unstructured data includes document files and raw streaming data from sensors. To outperform their peers, companies are shifting their focus towards Big Data development.

Wondering why companies are looking forward to adopting Big Data?

- Cost savings- When large amounts of data are to be stored and processed, using Big Data is beneficial.

- Better analytics- It provides better analytics as these frameworks help in processing huge chunks of data.

- Time reduction- The high-speed frameworks and in-memory analytics can easily identify

- Insights- It helps you gain insights into how the industry works.

- Decision making- It helps in organizing large volumes of data and

- Understanding the market- You can get a better understanding of the market conditions by analyzing the information collected.

Big Data is everywhere. Data is growing at a rapid pace: it is predicted that by 2025, 163 zettabytes of data will be created worldwide. This explains the rise in the number of frameworks, and the market is now flooded with them. Without any doubt, Hadoop dominates other platforms when it comes to Big Data development, but there are many other prominent, well-performing frameworks that you can use for your Big Data workloads.

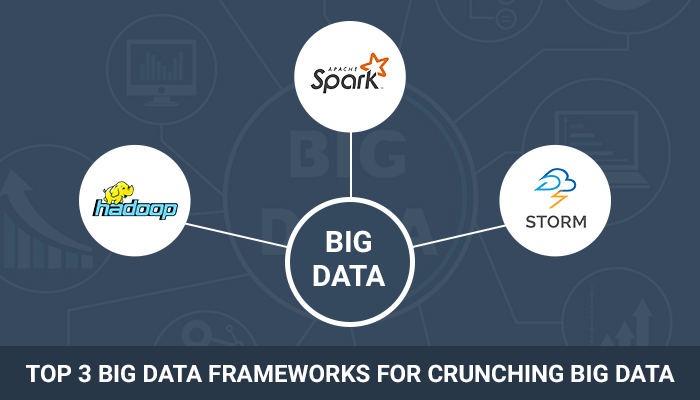

Based on their popularity and usability, these are the top Big Data platforms for processing large-scale data:

1. Hadoop

Hadoop is an open-source framework that provides a distributed way to store Big Data. It can run on-prem or in the cloud. Hadoop runs on clusters of computers, and its modules are designed to be fault-tolerant.
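To make the idea of distributed storage a little more concrete, here is a minimal sketch of how a file might be cut into blocks and copied to several machines. It is a toy model, not Hadoop code: the 128 MB block size and three replicas are assumed values (both are common HDFS defaults), the node names and the `place_blocks` and `readable_after_failure` helpers are hypothetical, and the round-robin placement is a simplification of what a real cluster does.

```python
import math

BLOCK_SIZE_MB = 128  # assumed block size (a common HDFS default)
REPLICATION = 3      # assumed number of copies per block (a common HDFS default)


def place_blocks(file_size_mb, nodes, block_size_mb=BLOCK_SIZE_MB, replication=REPLICATION):
    """Return {block_index: [nodes holding a replica]} using simple round-robin placement."""
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    n_blocks = math.ceil(file_size_mb / block_size_mb)
    placement = {}
    for block in range(n_blocks):
        # Each replica of a block lands on a different node.
        placement[block] = [nodes[(block + r) % len(nodes)] for r in range(replication)]
    return placement


def readable_after_failure(placement, failed_node):
    """The file stays readable if every block still has at least one live replica."""
    return all(any(node != failed_node for node in replicas) for replicas in placement.values())


nodes = ["node-a", "node-b", "node-c", "node-d"]  # hypothetical cluster
placement = place_blocks(file_size_mb=500, nodes=nodes)
print(placement)
print(readable_after_failure(placement, "node-b"))
```

Because every block lives on more than one machine, losing a single node does not lose the file, which is the sense in which the storage layer is fault-tolerant.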

Hadoop addresses several problems at once: storing colossal amounts of data, storing heterogeneous data, and accessing and processing that data quickly. It is used for search, data warehousing, log processing, and video and image analysis. Hadoop is best suited to high-throughput batch workloads where data is written once and read many times; it is a poor fit for low-latency data access or for files that are modified repeatedly.

The main features of Hadoop are:

- HDFS- The Hadoop Distributed File System is designed for high-throughput access to very large datasets. It allows you to load any kind of data across the cluster.

- YARN- Yet Another Resource Negotiator is the resource scheduler that manages the cluster's resources. It allows parallel processing of the data stored in HDFS.

- MapReduce- It is a highly configurable programming model for processing Big Data. The processing logic is sent to the worker nodes that hold the data, and the data is processed in parallel across those nodes.

- Hadoop Libraries- These shared libraries and utilities let third-party tools work with Hadoop.
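The MapReduce model from the list above can be illustrated with the classic word-count example. This is a single-process sketch in plain Python, not Hadoop's own API: the function names `map_phase`, `shuffle`, `reduce_phase` and `word_count` are chosen here for illustration, and on a real cluster the map and reduce steps would run on different nodes.

```python
from collections import defaultdict


def map_phase(line):
    """Map: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Shuffle: group all emitted values by key between the two phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reduce: combine all values for one key into a single result."""
    return key, sum(values)


def word_count(lines):
    # On a cluster, each line (or block of lines) would be mapped on a different node.
    mapped = [pair for line in lines for pair in map_phase(line)]
    return dict(reduce_phase(key, values) for key, values in shuffle(mapped).items())


counts = word_count(["big data big clusters", "data moves to the cluster"])
print(counts)
```

The point of the split is that `map_phase` looks at one line at a time and `reduce_phase` at one key at a time, so both steps can be spread across many machines without coordination beyond the shuffle.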

Hadoop is a long-standing champion and is well known for running on inexpensive commodity hardware. Because of the features it offers, it is by far the most popular platform for Big Data.

2. Apache Spark

Apache Spark is a lightning-fast, in-memory data processing engine with some of the best development APIs. The main aim behind developing Apache Spark was to overcome the shortcomings of Hadoop. This framework allows you to run machine learning, stream processing and SQL workloads.

Apache Spark also has easy-to-use APIs for operating on large datasets. Spark can be deployed in a number of ways, and it provides native bindings for Scala, Python, Java and R. Out of the box, Spark can run in a standalone cluster mode that requires only the Apache Spark framework and a JVM on each machine.

Apache Spark can be used for:

- Data streaming- It brings a language-integrated API to stream processing, with fault-tolerant semantics and out-of-the-box recovery. It is used for streaming ETL, data enrichment and complex session analysis.

- Machine learning- It covers common machine learning tasks such as clustering, classification and collaborative filtering. It also makes it easy to inspect real-time data packets.

- Fog computing- Spark runs programs much faster than Hadoop MapReduce and helps to write apps quickly in Java, Python and Scala.
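The data streaming use case above can be sketched with the micro-batch idea that Spark's classic streaming model is built on: the stream is cut into small batches, and each batch updates state held in memory. The code below is plain Python rather than Spark's API. The `micro_batches` and `running_counts` helpers and the click data are hypothetical, and batching by a fixed number of events is an assumption made for simplicity (Spark batches by time interval).

```python
from collections import Counter


def micro_batches(events, batch_size):
    """Cut a stream of events into small fixed-size batches."""
    batch = []
    for event in events:
        batch.append(event)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:  # flush the final, partially filled batch
        yield batch


def running_counts(events, batch_size=3):
    """Yield the cumulative count per key after each micro-batch."""
    state = Counter()  # state kept in memory between batches
    for batch in micro_batches(events, batch_size):
        state.update(batch)
        yield dict(state)


clicks = ["home", "cart", "home", "pay", "home", "cart", "pay"]  # hypothetical stream
snapshots = list(running_counts(clicks))
print(snapshots)
```

Each snapshot is available as soon as its batch has been processed, so results can be read while the stream is still arriving.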

With Apache Spark you can easily process structured and unstructured data, work through large amounts of real-time and archived data, and combine them with the appropriate graph and machine learning algorithms.

3. Apache Storm

Apache Storm is another Apache framework, built specifically for real-time processing, and it can be used with any programming language. It is scalable and fault-tolerant. Storm focuses on defining small, discrete operations that are composed into a topology, which functions as a pipeline for transforming data.

The Storm scheduler distributes the workload across the nodes according to the topology configuration. Storm also works well with Hadoop HDFS.
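The topology idea can be sketched as a chain of small operations. In Storm's own terminology the sources of a stream are called spouts and the processing steps are called bolts. The example below borrows those names but is plain Python, not Storm's API; the `sentence_spout`, `split_bolt` and `count_bolt` functions are hypothetical and run in a single process rather than across a cluster.

```python
def sentence_spout():
    """Spout: the source of the stream (a fixed list here; unbounded in practice)."""
    yield from ["storm processes streams", "streams never end"]


def split_bolt(sentences):
    """Bolt: one small operation, splitting each sentence into words."""
    for sentence in sentences:
        yield from sentence.split()


def count_bolt(words):
    """Bolt: keep a running count and emit (word, count) after every word."""
    counts = {}
    for word in words:
        counts[word] = counts.get(word, 0) + 1
        yield word, counts[word]


# Wiring the pieces together forms the topology: spout -> split -> count.
topology = count_bolt(split_bolt(sentence_spout()))
emitted = list(topology)
print(emitted)
```

Because each step does one small thing, a scheduler can run many copies of a step on different nodes, which is how the workload gets balanced across the cluster.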

The main features of Apache Storm are:

- Built-in fault tolerance

- Written in Clojure

- The workload is distributed to the nodes

- Works with a Directed Acyclic Graph (DAG) topology

- Auto-restart on crashes

- JSON format for output files

- It has amazing horizontal scalability

Storm also provides a computation system that can be used for real-time analytics, machine learning and unbounded stream processing. A Storm cluster is superficially similar to a Hadoop cluster, but Storm runs topologies, whereas Hadoop runs MapReduce jobs.

Apache Storm can be used by small as well as large organizations. It is robust, user-friendly, fault-tolerant and flexible. Storm guarantees that data will be processed even if connected nodes in the cluster die. Best of all, when the load increases, you can maintain performance by adding resources linearly.

To sum up:

Big Data is changing the norm in business models. The way organizations make decisions and drive change is now heavily influenced by Big Data. It gives an entirely new perspective on managing multiple sources of data, allowing businesses to store more data than has ever been possible.

In reality, data arrives at breakneck speed and in many different formats. The importance of Big Data across industries has also increased tremendously, as companies need to provide results on the fly.
